Ayn Rand was quite fond of tables.

The central idea of Randian epistemology is that abstract concepts can be logically anchored to observed reality by the process of “measurement omission,” where a concept is defined by the key features its referents possess, regardless of the precise dimensions of those features. Rand’s typical example of this was the concept “table,” which refers to any flat, level surface with one or more supports underneath it, designed to hold other objects. Apart from some constraints on the ranges of those dimensions, it’s not necessary to know precisely how high the supports are, or how long or wide the surface is, in order to identify an object as a table.

Looked at another way, this framing also implies that you can pick arbitrary values for those features, and imagine or build a completely valid table based on them, even if you’ve never seen a table with that particular combination of values before. This has parallels to the idea of “latent space,” which has been popularized by recent trends in deep machine learning. The set containing all possible images of tables is much smaller than the set of all possible images without constraints (which mostly contains images of random noise). If you were able to make a diagram of the inconceivably high-dimensional space of all possible images, and plot the points representing valid images of tables within that space, you’d see them cluster around complicated squiggles (“manifolds”) which correspond to the axes along which images of tables are permitted to vary. For example, the manifold might have axes for length and width of the surface, number of supports, length of supports, and some other stuff like camera angle, lighting, and color. You can then posit a function that maps points in all-images-space to points in the much smaller (but still inconceivably complex) space that runs along that manifold and contains only images of tables.
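To get a feel for how lopsided those two sets are, here is a toy sketch. Everything in it is an assumption made up for illustration: the “images” are 5×5 black-and-white grids, and `render_table` is a hypothetical hand-written renderer with just two latent axes, surface width and leg height. Real image spaces and real manifolds are vastly larger and messier than this.

```python
from itertools import product

SIZE = 5  # hypothetical 5x5 black-and-white "image"

def render_table(width, leg_height):
    """Draw a table: one row of surface with a leg under each end."""
    image = [[0] * SIZE for _ in range(SIZE)]
    top = SIZE - 1 - leg_height            # row index of the surface
    left = (SIZE - width) // 2             # roughly center the surface
    for col in range(left, left + width):
        image[top][col] = 1                # the flat, level surface
    for row in range(top + 1, SIZE):
        image[row][left] = 1               # left leg, down to the floor
        image[row][left + width - 1] = 1   # right leg
    return tuple(map(tuple, image))

# Every point in the two-dimensional latent space (width, leg_height).
latent_points = list(product(range(2, SIZE + 1), range(1, SIZE)))
table_images = {render_table(w, h) for w, h in latent_points}

# Each of the 25 pixels can be on or off independently.
all_images = 2 ** (SIZE * SIZE)

print(len(table_images), "table images out of", all_images, "possible images")
```

Even in this tiny grid, the tables occupy a handful of points in a space of tens of millions, and every one of them can be reached by picking two small numbers.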

So, one strategy for generating novel images of tables would be to take a deep neural network that inputs and outputs images of a certain size, and has a set of inner “hidden” layers that are significantly smaller, feed a bunch of images of tables through that network, and optimize the weights of that network so that the input and output images are as similar as possible. The result should be that all nonessential information from the input image is discarded, and the innermost hidden layer contains only the essential information necessary to describe and reconstruct this particular table image. If the network has been well-fitted to the training data, then it should be capable of generating any conceivable image of a table based on variations on that essential information. Then, you split the network apart and start feeding random values into the innermost layer, and it should reconstruct plausible-looking images of tables. (This architecture is called an autoencoder.)
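Here is a minimal sketch of that idea, with heavy caveats. It is a linear autoencoder with a one-number bottleneck, written in plain Python, not a deep network, and the “images” are stand-ins: each hypothetical table is just three numbers (width, length, leg height) that all scale together with one hidden factor. The names `encode`, `decode`, and `train`, and all the constants, are my own assumptions for illustration.

```python
import random

# Hypothetical toy data: each "table" is (width, length, leg_height), and all
# three scale together with one hidden factor t, so the data lie on a line.
DIRECTION = (2 / 3, 2 / 3, 1 / 3)
rng = random.Random(0)
tables = [tuple(t * d for d in DIRECTION)
          for t in (rng.uniform(0.5, 1.5) for _ in range(50))]

def encode(w, x):
    """Compress a 3-number table into a single latent number."""
    return sum(wi * xi for wi, xi in zip(w, x))

def decode(v, z):
    """Expand one latent number back into a 3-number table."""
    return tuple(vi * z for vi in v)

def reconstruction_loss(w, v, data):
    """Mean squared difference between each input and its reconstruction."""
    total = 0.0
    for x in data:
        x_hat = decode(v, encode(w, x))
        total += sum((a - b) ** 2 for a, b in zip(x, x_hat))
    return total / len(data)

def train(data, steps=500, lr=0.1):
    """Plain gradient descent on the reconstruction loss."""
    w = [0.5, 0.5, 0.5]   # encoder weights
    v = [0.5, 0.5, 0.5]   # decoder weights
    for _ in range(steps):
        grad_w = [0.0] * 3
        grad_v = [0.0] * 3
        for x in data:
            z = encode(w, x)
            err = [vi * z - xi for vi, xi in zip(v, x)]    # x_hat - x
            back = sum(e * vi for e, vi in zip(err, v))    # error sent back through the decoder
            for i in range(3):
                grad_v[i] += 2 * err[i] * z / len(data)
                grad_w[i] += 2 * back * x[i] / len(data)
        w = [wi - lr * g for wi, g in zip(w, grad_w)]
        v = [vi - lr * g for vi, g in zip(v, grad_v)]
    return w, v

initial_loss = reconstruction_loss([0.5] * 3, [0.5] * 3, tables)
w, v = train(tables)
final_loss = reconstruction_loss(w, v, tables)

# "Split the network apart": skip the encoder and feed the decoder a latent
# value of our own choosing to get a table that was never in the training set.
novel_table = decode(v, 0.8)
print("loss before:", initial_loss, "after:", final_loss)
print("novel table (width, length, leg_height):", novel_table)
```

The decoded table keeps the proportions shared by every training example, because that shared structure is all the single latent number has room to carry. Whether random latent values land on plausible outputs in a real, nonlinear autoencoder depends on how the latent space happens to be laid out, which this toy does not capture.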

In Leonard Peikoff’s Objectivism: The Philosophy of Ayn Rand, the following table-related exchange is recounted:

“Suppose”—a well-known professor of philosophy asked Miss Rand years ago—“someone invents a ‘hanging table’: an object with a flat, level surface designed to hold other objects, but which hangs from the ceiling by chains, rather than resting on legs on the floor. Is it ‘really’ a table or not? And how could anyone know?”

Precisely because the “hanger” is borderline, Miss Rand replied, one has several options. Since the entity does have some significant similarities to tables, one may choose to subsume it under that concept (which would require a contextual alteration in one’s definition of “table”). Or: since the entity does have some significant differences from tables, one may form a new concept to designate it. Or: since the entity is not widespread and is of no importance in regard to further cognition, one may and probably would choose neither option. One need not designate it by any one concept, old or new, but may identify it instead by a descriptive phrase, which is exactly what the professor did in posing his question.

Which is all very well and good for preserving the foundation of conceptual epistemology in objective reality. But, this response does kind of gloss over an important part of the professor’s question: “How could anyone know?” If we’ve decided to quibble over whether this thing fits the definition of “table” or not (as opposed to the hundred thousand other definitions in the dictionary), or how we need to modify the definition of “table” to accommodate it, then we’re already pretty darn sure that it’s probably a table, regardless of the definition. How can that be?

Maybe we were simply wrong in choosing vertical supports as an essential feature of tables, and it’s really just the flat, level surface that matters? But it’s at least conceivable that in unusual circumstances like zero-gravity or artificial-gravity environments, there could be angled surfaces or spherical objects that would still be recognizable as tables. So what’s left that can be the true essence of “table”?

Well, what’s left is that they are “designed to hold other objects.”

Useful here is the idea of “disguised queries,” from Eliezer Yudkowsky’s essay of the same name. Words and concepts, in Yudkowsky’s epistemological model, are tools for drawing probabilistic inferences about the hidden properties of an object based on its visible properties. If you want to know whether an object is a “rube” or a “blegg,” it’s probably because you’re trying to mine vanadium from its innards. Similarly, if you’re wondering whether an object is a “table” or not, and you’re not a complete nerd who reads dictionaries for fun, it’s probably because you’re at a party and looking for something you can put your drink on. In the real world, you can make predictions on that topic fairly reliably based on features like flat surfaces and vertical supports. In a world where hanging tables or zero-gravity tables are common, they’d be less useful for that purpose.
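The party scenario can be sketched as a small Bayes’-rule calculation. To be clear, this is my own toy rendering, not anything from Yudkowsky’s essay: every probability below is invented for illustration, and treating the two features as independent given the answer is a simplifying assumption.

```python
# Hypothetical numbers, invented purely for illustration.
PRIOR_HOLDS_DRINK = 0.2   # P(object can hold my drink), before looking at it

# P(feature is visible | object can hold my drink, object cannot)
LIKELIHOODS = {
    "flat_level_surface": (0.95, 0.30),
    "vertical_supports": (0.90, 0.20),
}

def posterior_holds_drink(observed_features, likelihoods=LIKELIHOODS):
    """Bayes' rule, treating the features as independent given the answer."""
    p_yes = PRIOR_HOLDS_DRINK
    p_no = 1.0 - PRIOR_HOLDS_DRINK
    for feature in observed_features:
        given_yes, given_no = likelihoods[feature]
        p_yes *= given_yes
        p_no *= given_no
    return p_yes / (p_yes + p_no)

surface_only = posterior_holds_drink(["flat_level_surface"])
surface_and_legs = posterior_holds_drink(["flat_level_surface", "vertical_supports"])

# A world where hanging tables are common: plenty of drink-holding objects
# have no vertical supports, so seeing supports tells you less.
hanging_world = dict(LIKELIHOODS, vertical_supports=(0.50, 0.20))
surface_and_legs_hanging_world = posterior_holds_drink(
    ["flat_level_surface", "vertical_supports"], hanging_world)

print(surface_only, surface_and_legs, surface_and_legs_hanging_world)
```

Each visible feature nudges the answer to the real question, “can I put my drink here?”, and a feature nudges less when it stops being typical of the things that can hold a drink.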

An interesting consequence of Yudkowsky’s description of concepts as probability flows is that it allows you to make inferences in reverse as well. Given the concept of a hanging table or a zero-gravity table, and some knowledge about the constraints of physics, you can form a fairly detailed image in your mind of how that object would look and what features it would have. You can predict, for example, that a hanging table likely wouldn’t have vertical legs dangling in the air underneath it. Given the concept of a triangular lightbulb (another example from Yudkowsky’s sequences) and a bit of knowledge of electrical wiring and glass production, you can predict that it would likely be the glass part rather than the metal part that is triangular, and that the corners and edges would be rounded rather than sharp.

Suppose you’ve trained a neural network to generate perfect images of tables based on points along the latent manifold of surface and support dimensions. It’ll probably be capable of generating a reasonable picture of any table you’ve ever seen. If it’s well-trained enough, it might even be able to generate pictures of highly improbable tables, like tables thousands of feet high with a surface the size of a postage stamp, or tables with hundreds of supports underneath them. But, no matter what point in that latent space you choose, it will never, ever generate a picture of a “hanging table.”
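A caricature of that idealized generator, as a sketch: a hand-written `decode` function whose latent axes are exactly surface area, support count, and support height. The `TableSpec` type, the ranges, and the units are all hypothetical. The thing to notice is that “where the supports attach” is not an axis at all.

```python
from dataclasses import dataclass
from itertools import product

@dataclass(frozen=True)
class TableSpec:
    surface_area_sq_ft: float
    support_count: int
    support_height_ft: float
    supports_attach: str = "below"   # not a latent axis: fixed for every output

def decode(z):
    """Map a latent point (three numbers in [0, 1]) to a table description."""
    area, count, height = z
    return TableSpec(
        surface_area_sq_ft=0.01 + area * 100.0,
        support_count=1 + round(count * 499),
        support_height_ft=0.5 + height * 5000.0,
    )

# A highly improbable table is still reachable: a tiny surface on hundreds of
# supports, thousands of feet tall.
improbable = decode((0.0, 1.0, 1.0))

# Sweep a grid over the whole latent space and look for a hanging table.
grid = [i / 10 for i in range(11)]
every_table = [decode(z) for z in product(grid, repeat=3)]
hanging = [t for t in every_table if t.supports_attach != "below"]

print(improbable)
print(len(every_table), "tables generated,", len(hanging), "of them hanging")
```

No amount of searching the latent space helps here, because the missing feature was baked in as a constant instead of being exposed as something that can vary.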

Real-world image generators don’t work quite like that, of course. The encodings they settle on for images rarely correspond to human-readable features like “area of table surface” or “number of vertical supports.” So it’s possible, in principle, that a reasonable image of a hanging table might exist somewhere in the latent space of one of these generators.

But for that to be the case, it would probably need to learn that feature of “designed to hold other objects”-ness somehow, and simulate something like the reversed reasoning process that a human brain would use to remove the useless legs from its image of a hanging table.

That means, I think, that it might be possible to gauge whether an image generation network is incorporating real-world knowledge in its images, as opposed to just statistical relationships of pixels, based on whether it includes legs when prompted to generate images of hanging tables. (Assuming that its training data didn’t include real images of hanging tables, a few of which do exist on the web.) And, I strongly suspect that the top image generation models as of this writing (early 2023) will all include vestigial table legs in their images.

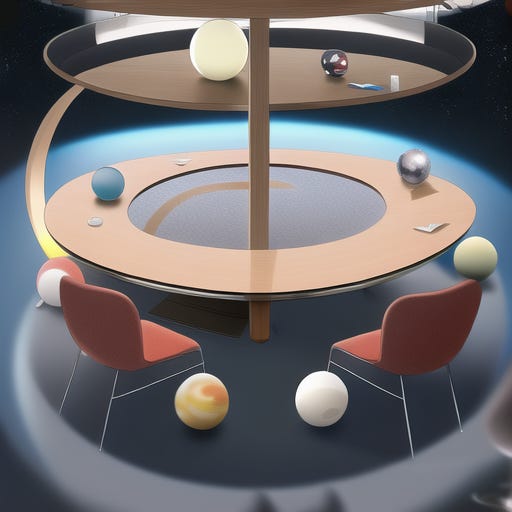

With preregistration out of the way, let us test that expectation.

A couple of these are better than I expected. The third one from DALL-E kind of looks like it got the idea; it still appears to have legs, but at least the legs are pointing upwards and connected to a pole or something. (Although it’s a bit too small to do much object-supporting.) The last two from Stable Diffusion are kind of going in that direction as well. They’re cropped at exactly the right place to make it hard to tell whether the algorithm was filling in vertical supports all the way to the floor, but the fourth one might be strictly hanging. Most of the rest, of course, are just peculiar Escherian blends of the concepts of “table” and “hangyness.”

It is interesting that most of the “good-ish” images here use basically the same design of a small round surface suspended by wooden rods attached to a single point, forming a triangle. It’s a design I didn’t expect, at least; my expectation of a “hanging table” would be a rectangle with a vertical chain at each corner, for maximum stability. But the fact that the same theme is used repeatedly in both models makes me suspect that there might be an image in the training corpus somewhere that explicitly associates a design like that with the specific phrase “hanging table.”

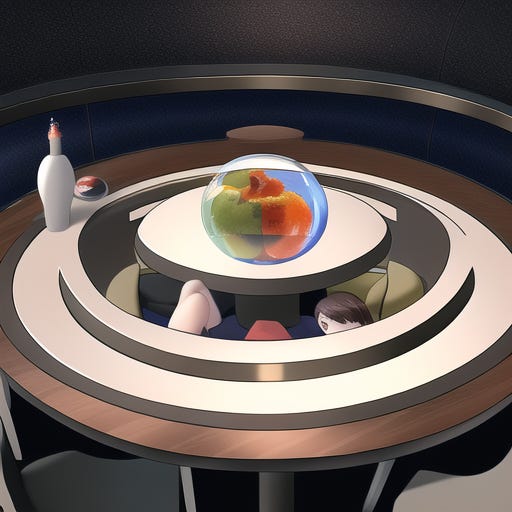

I also tested the prompt on NovelAI:

It did surprisingly well, despite being optimized for producing anime images of naked waifus! I really can’t complain about that second one in the “full training set” results. It’s vaguely physically plausible if a little bit unstable-looking, and it’s not a terrible way to actually use a hanging table as a design feature in a real room. It’s also distinct from the basket-like design that most of the other images seem to tend towards. It’s still notable that it’s presented in the context of a larger, normal table, though.

Note that I did run through a few more images from NovelAI, trying to settle on some consistent parameters, so the fact that it came up with one reasonable result may reflect a larger sample size.

Let’s try a longer descriptive phrase.

DALL-E got pretty close with that third one. It’s not really clear why the chains are continuing below the table, though. And, again, it’s doing the thing where it crops it very carefully so you can’t see what’s going on in the space between the table’s surface and the floor.

The others don’t do much with this prompt, which supports my suspicion that their better results previously were based on some real image in the training data.

So let’s see what they do with something more farfetched.

Nope, nope, nope. Some of these are pretty visually interesting images, but not one of them even attempted to make the table spherical or floating.

Here, we’re probably running up against the difficulty these language models have with compositionality. The system doesn’t see a hierarchical linguistic structure that associates the words “spherical” and “floating” specifically with the table; it just sees a vague blob of words that has all those elements in there somewhere. And because its training set presumably contains absolutely zero images of spherical floating tables, it dismisses that connection between words as improbable and locks onto a more statistically probable but grammatically invalid interpretation.
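The “vague blob of words” failure can be illustrated with the crudest possible text representation, a bag of words. This is an analogy only: real text-to-image systems use learned text encoders, not literal word counts, and the two prompts below are hypothetical examples I made up, not the ones used in these tests.

```python
from collections import Counter

def bag_of_words(prompt):
    """Discard word order and grouping; keep only which words appear."""
    return Counter(prompt.lower().split())

# Two hypothetical prompts that attach the adjectives to different nouns.
intended = "a spherical table floating above a square rug"
scrambled = "a square table above a spherical floating rug"

same_blob = bag_of_words(intended) == bag_of_words(scrambled)
print("different sentences:", intended != scrambled)
print("identical bags of words:", same_blob)
```

A system that only sees the bag has no way to tell which noun is supposed to be spherical, so it is free to fall back on whichever pairing looks most like its training data.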

I’m not sure if solving compositionality is a necessary prerequisite for solving the “hanging table” problem, but it’s sure going to be hard to author novel prompts if you can’t rely on compositionality to work.

Just for the sake of argument, I’ll try one test that eliminates some of that burdensome context business and includes only the key concepts “spherical” and “table”:

Well, that’s a little more creative, at least. And I guess you could maybe generously interpret this as staying true to the actual purpose of tables, within the limits of Earth’s gravity.

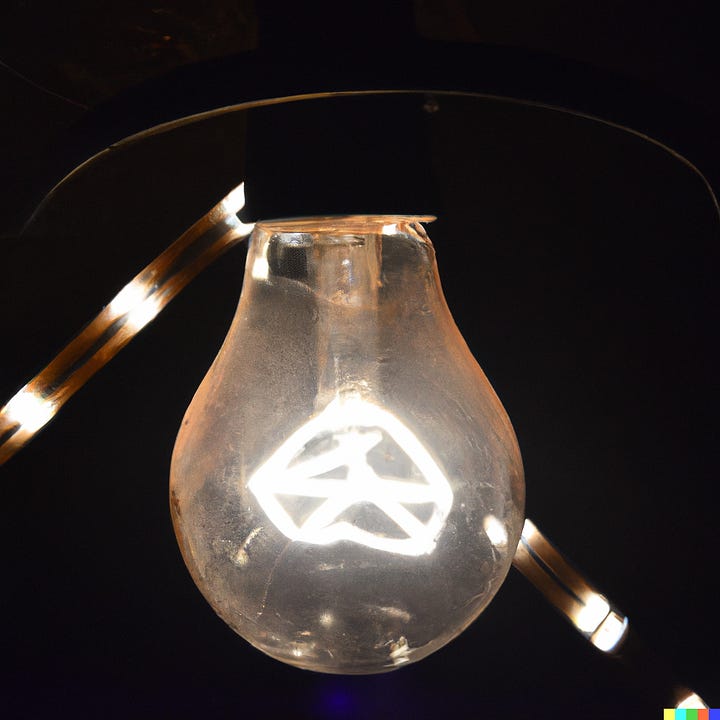

Anyway. Just one more test.

Nope, not a whole lot here either. Stable Diffusion at least made a reasonable effort. Its third image does draw a normal-looking tapered bulb with a perspective that makes it look plausibly triangular in cross-section, but I don’t think a normal human would guess that it was supposed to be “triangular” from that image. (Humans are more likely to imagine rotating that shape in 3D and call it “conical” or something. I’d expect a “triangular lightbulb” drawn by a human artist to emphasize the three-dimensional triangularity by drawing either a pyramid or a triangular prism.) That weird LED-strip-looking thing in the first Stable Diffusion image is also an interesting interpretation.

Some conclusions:

These were a little better than I thought they would be overall. The “spherical table” problem is the only one that really didn’t come anywhere close to the desired result.

I’m fairly confident that there’s a real “hanging table” or two in the training data, so that specific prompt is probably not a great indicator of anything.

Given a weird prompt, there seems to be a tendency for these algorithms to use tricks with camera angles to cover up the weirdness and thereby reduce the overall improbability of the image. Hence the images that crop out the empty space underneath a hanging table, or relatively normal-looking lightbulbs that have a vaguely triangular cross-section. Whereas I think human artists will recognize that the improbability is the point of the prompt and do their best to make sure that the result is unmistakably weird. I’m not sure how strong that tendency is or if there’s anything useful you can conclude from it, though.

Since these algorithms still can’t reliably interpret grammar correctly, adding more contextual detail to the prompt just seems to give them more opportunities to reduce weirdness. Keeping prompts shorter and more focused on the unexpected object itself seems to retain the weirdness of the object better.